1. The Agentic Moment

Something shifted in 2025.

For years, AI lived inside a text box. You typed a question, you got an answer. Useful, but passive. The AI waited for you. It couldn't browse a website, file a ticket, write and run code, or coordinate a sequence of steps to get something done.

That's no longer the case. AI agents, systems that can plan, act, use tools, and operate autonomously across multi-step workflows, have moved from research papers to production software. Coding agents are writing and shipping real code. Customer service agents are resolving tickets end-to-end. Legal agents are reviewing contracts at scale. The numbers behind this shift are significant: an estimated $10 billion in venture capital invested in agentic AI startups in 2025, revenue growth rates without precedent in SaaS history, and token consumption patterns that suggest we're only at the beginning of an exponential curve.

This post is our attempt to map the landscape, from how agents are defined, to who's building them, to how much compute they consume, to where they're still falling short. If you're building in AI, investing in AI, or just trying to understand where this is all going, this is meant to give you a comprehensive, data-grounded picture of where agentic AI stands today.

Figure 1: The evolution of AI systems, from passive chatbots to autonomous multi-agent systems (2023 to 2026)

2. What Makes AI "Agentic"?

Before we dive into the data, we need to establish what we actually mean by "agentic AI," because the term has been stretched to the point where it risks meaning everything and nothing.

There is no single agreed-upon definition. But a working consensus has emerged across the major AI labs and industry analysts, converging on several key dimensions.

OpenAI defines agenticness as the degree to which a system can "adaptably achieve complex goals in complex environments with limited direct supervision." They treat it as a spectrum, not a binary, measured across four axes: goal complexity, environment complexity, adaptability, and autonomy.

Anthropic draws a more architectural line, distinguishing workflows (LLMs orchestrated through predefined code paths) from agents (systems where LLMs dynamically direct their own processes and tool usage).
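To make this distinction concrete, here is a minimal Python sketch. The tool names, the `stub_policy` function, and the step budget are hypothetical; `stub_policy` stands in for the LLM call that would choose the next action in a real agent. The only difference between the two functions is who decides the next step: the developer's code, or the model.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical stand-in tools; a real system would call search APIs, databases, etc.
TOOLS: Dict[str, Callable[[str], str]] = {
    "search": lambda text: f"results({text})",
    "summarize": lambda text: f"summary({text})",
}

def run_workflow(query: str) -> Tuple[List[str], str]:
    """Workflow: the developer hard-codes the sequence of steps."""
    trace: List[str] = []
    observation = query
    for tool in ("search", "summarize"):  # path fixed in code, never changes
        observation = TOOLS[tool](observation)
        trace.append(tool)
    return trace, observation

def run_agent(
    query: str,
    choose_next: Callable[[List[str], str], Optional[str]],
    max_steps: int = 5,
) -> Tuple[List[str], str]:
    """Agent: a model-driven policy picks the next tool, or decides to stop."""
    trace: List[str] = []
    observation = query
    for _ in range(max_steps):  # step budget as a safety bound
        tool = choose_next(trace, observation)  # stands in for an LLM call
        if tool is None:  # the policy judges the goal achieved
            break
        observation = TOOLS[tool](observation)
        trace.append(tool)
    return trace, observation

# Hypothetical policy: search twice to refine, then summarize, then stop.
def stub_policy(trace: List[str], observation: str) -> Optional[str]:
    if trace.count("search") < 2:
        return "search"
    if "summarize" not in trace:
        return "summarize"
    return None

print(run_workflow("agent market")[0])             # ['search', 'summarize']
print(run_agent("agent market", stub_policy)[0])   # ['search', 'search', 'summarize']
```

In the workflow, the path is identical on every run; in the agent, the same code produces different paths depending on what the policy decides, which is exactly where both the flexibility and the reliability risk come from.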

Google Cloud frames agentic AI through a five-step operational cycle: perceive, reason, plan, act, reflect.

Gartner, which named agentic AI its #1 strategic technology trend for 2025, warns explicitly against "agentwashing," the practice of labeling basic AI assistants as agents when they lack genuine autonomy.

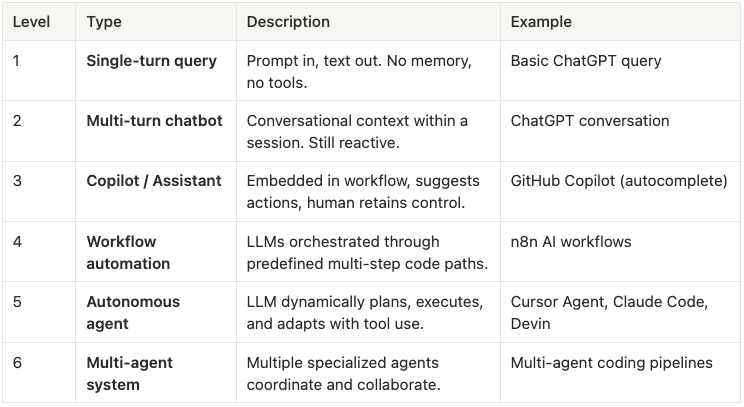

The most useful way to think about it is as a capability spectrum:

Table 1: The agentic AI capability spectrum, from single-turn queries to multi-agent coordination.

The critical differentiators that separate genuinely agentic systems from standard LLM usage are tool use (interacting with external systems like APIs, databases, browsers, and code interpreters), multi-step planning (decomposing goals into subtasks and executing them in sequence), autonomy (operating without constant human prompting or approval), and persistence (maintaining state and memory across interactions).

Or as one framework puts it simply: "Chatbots converse. Copilots assist. Agents act."

3. Where the Market Is Pulling Hardest

The clearest way to understand where agentic AI is headed is to look at where the demand is coming from. Not which startups are raising capital, but which real-world problems are pulling autonomous systems into production.

Before looking at any survey data or deployment reports, it is worth asking a more fundamental question: what conditions make a workflow ready for agents in the first place? Across the landscape, the use cases pulling hardest share four structural characteristics: high task volume that makes manual execution expensive, multi-step workflows that require tool use and cross-system coordination, objectively measurable outputs that allow agents to be evaluated, and significant labor costs that create immediate ROI when automation succeeds. Where all four conditions are present, agentic companies are growing fastest. Where one or more is missing, adoption is slower and less sticky.
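The four-condition test can be written down as a simple checklist. This is a minimal sketch, not a scoring model from any of the cited reports: the `Workflow` class and the example judgments are hypothetical, and a real assessment would deal in degrees rather than yes/no answers.

```python
from dataclasses import dataclass

@dataclass
class Workflow:
    """The four structural conditions described above, as yes/no judgments."""
    name: str
    high_volume: bool        # enough repetitions that manual execution is expensive
    multi_step: bool         # needs tool use and cross-system coordination
    measurable_output: bool  # success can be checked objectively
    high_labor_cost: bool    # successful automation pays back immediately

    def conditions_met(self) -> int:
        return sum([self.high_volume, self.multi_step,
                    self.measurable_output, self.high_labor_cost])

    def outlook(self) -> str:
        # All four present: fastest growth. Any missing: slower, less sticky.
        if self.conditions_met() == 4:
            return "strong pull"
        return "slower, less sticky"

# Hypothetical examples; the True/False judgments are illustrative only.
triage = Workflow("support ticket triage", True, True, True, True)
branding = Workflow("brand campaign concepting", False, True, False, True)
print(triage.outlook(), triage.conditions_met())      # strong pull 4
print(branding.outlook(), branding.conditions_met())  # slower, less sticky 2
```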

The functions where agents are scaling fastest, according to McKinsey, are IT, software engineering, service operations, and marketing and sales. Databricks' 2026 State of AI Agents report, based on observed enterprise deployments, tells the same story from the usage side: market intelligence, customer experience, compliance, and healthcare knowledge management rank among the highest-volume agent use cases. Though each report uses its own taxonomy, the underlying pattern maps to the same set of domains. We have organized them into six categories based on where the structural demand is clearest.

Here are the six use cases driving the agentic economy today.

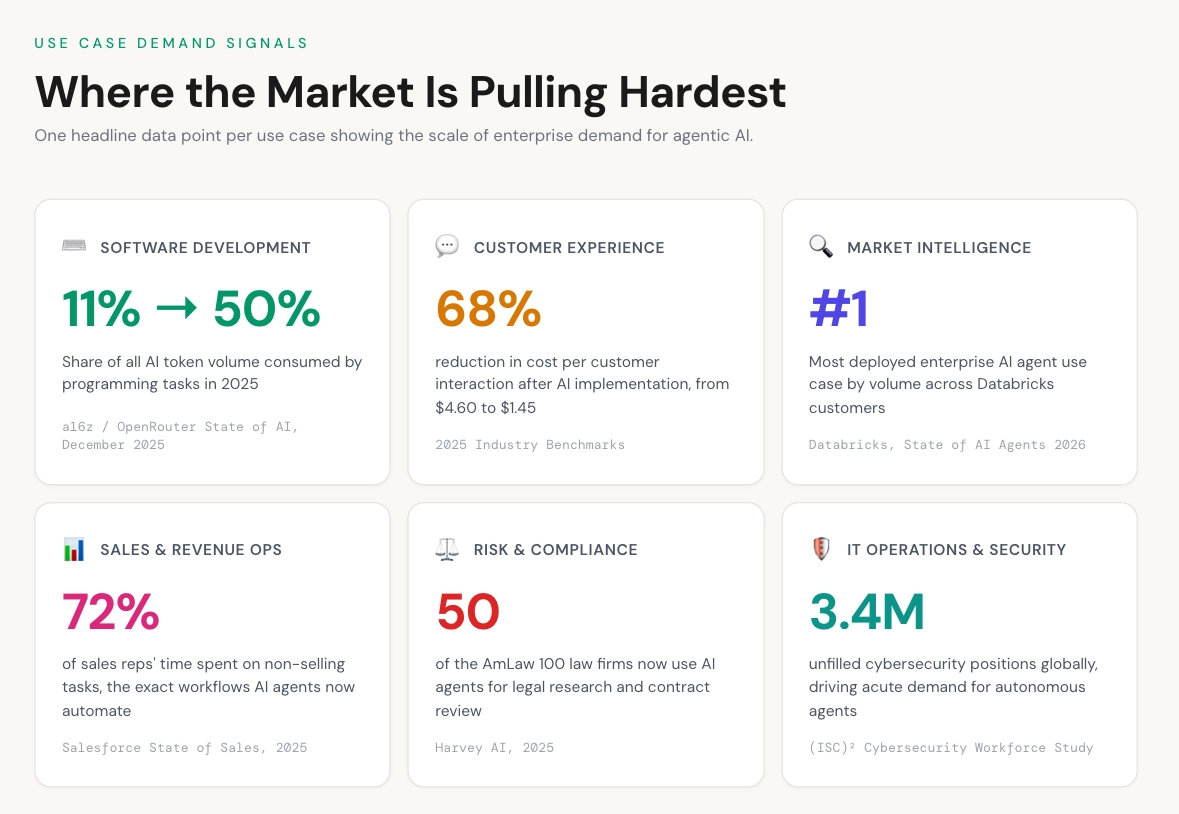

Figure 2: Headline demand signals across the six leading agentic AI use cases in 2025.

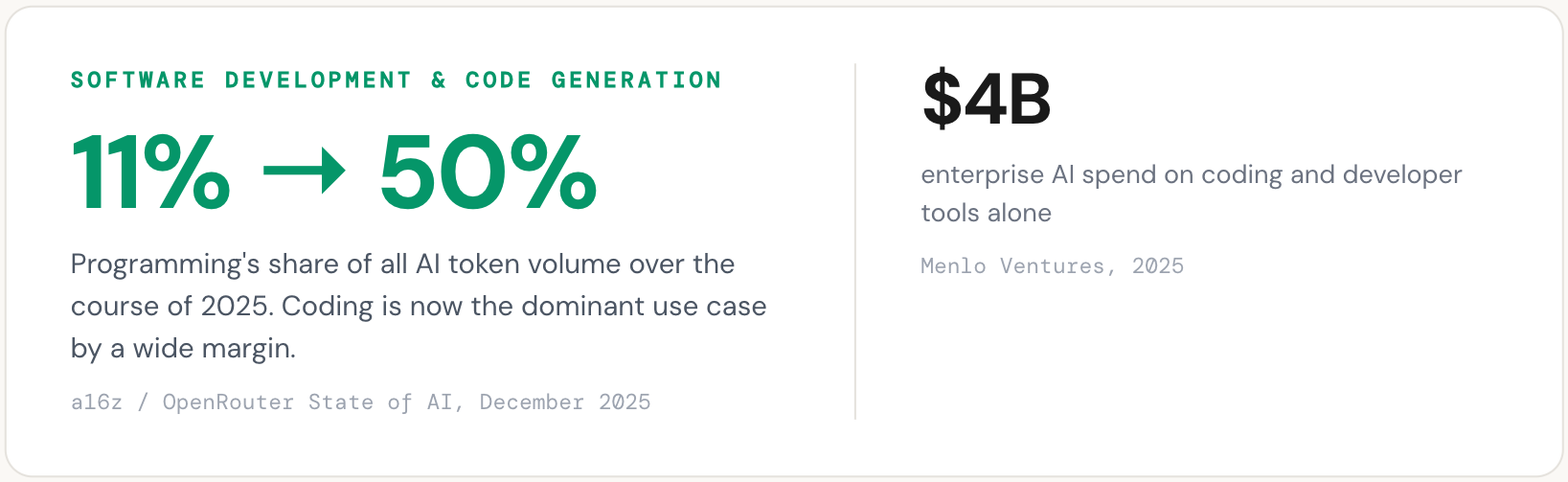

Software development and code generation

No use case has adopted agentic AI faster or more deeply than software development. The reason is structural: coding is one of the few knowledge workflows where output is objectively verifiable. Tests pass or fail. Code compiles or it doesn't. Errors surface immediately. This makes it an ideal environment for autonomous systems to operate with reduced human oversight.

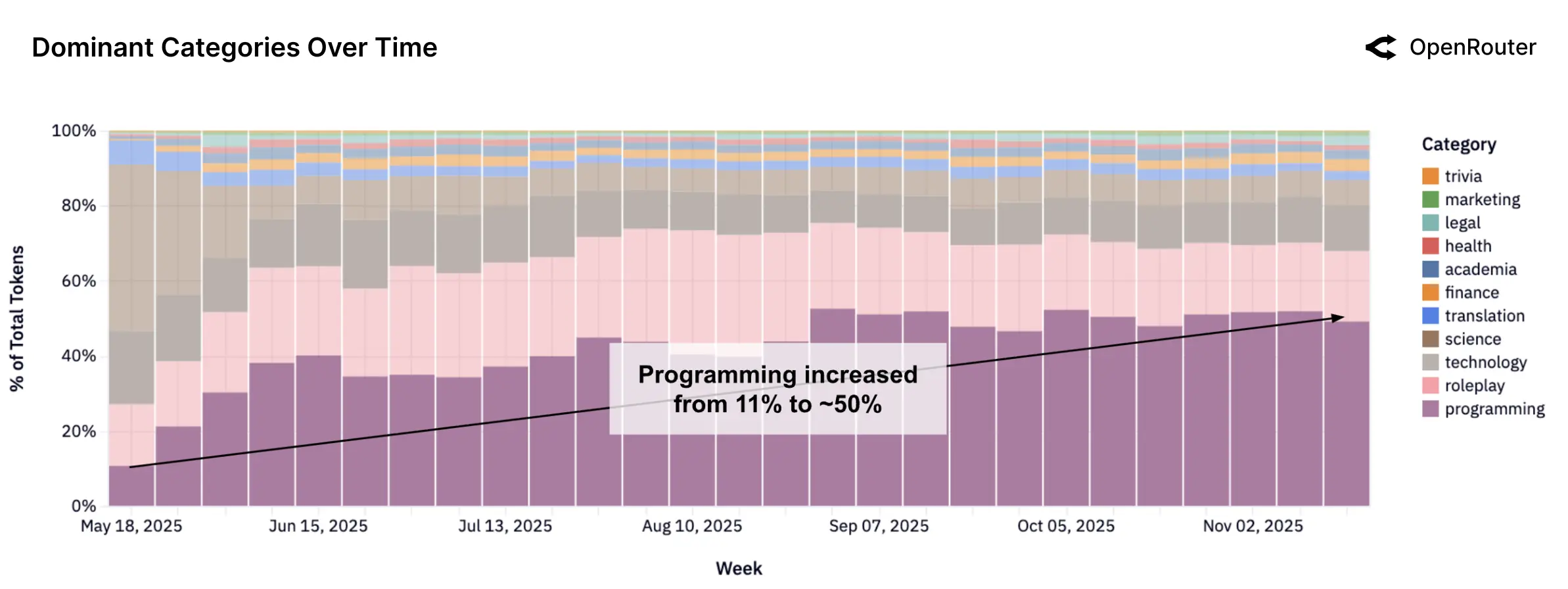

The demand signal is clear. Menlo Ventures' 2025 enterprise survey found that coding and developer tools accounted for $4 billion in enterprise AI spend, the single largest departmental category, and that more than half of all developers now use AI coding assistants daily. Programming tasks surged from 11% to approximately 50% of all token volume on the OpenRouter platform over the course of 2025, according to the a16z/OpenRouter State of AI study. Average prompt lengths in coding workflows quadrupled, reflecting a shift from simple autocomplete requests to complex, multi-file, multi-step instructions.

What developers are asking agents to do has changed substantially. Early AI coding tools offered line-level suggestions. Today's use cases include autonomous issue resolution (taking a GitHub issue from description to pull request), full application generation from natural language descriptions, codebase-wide refactoring, and continuous test generation. The companies building products for these workflows have seen revenue trajectories that reflect genuine demand pull. Cursor reached $100M ARR within 12 months of launch and scaled to $1B ARR in under two years. Replit saw revenue surge from under $3M to over $150M after launching its Agent product. Lovable went from zero to $200M ARR in approximately 14 months by targeting non-developers who want to build software through natural language.

The enterprise adoption data reinforces this further. Anthropic's empirical data from Claude Code shows that the longest autonomous coding sessions doubled from under 25 minutes to over 45 minutes between October 2025 and January 2026. Experienced users auto-approve agent actions at rates exceeding 40%. Developers are not just experimenting with agents. They are trusting them with longer, more complex tasks and stepping back from manual oversight.

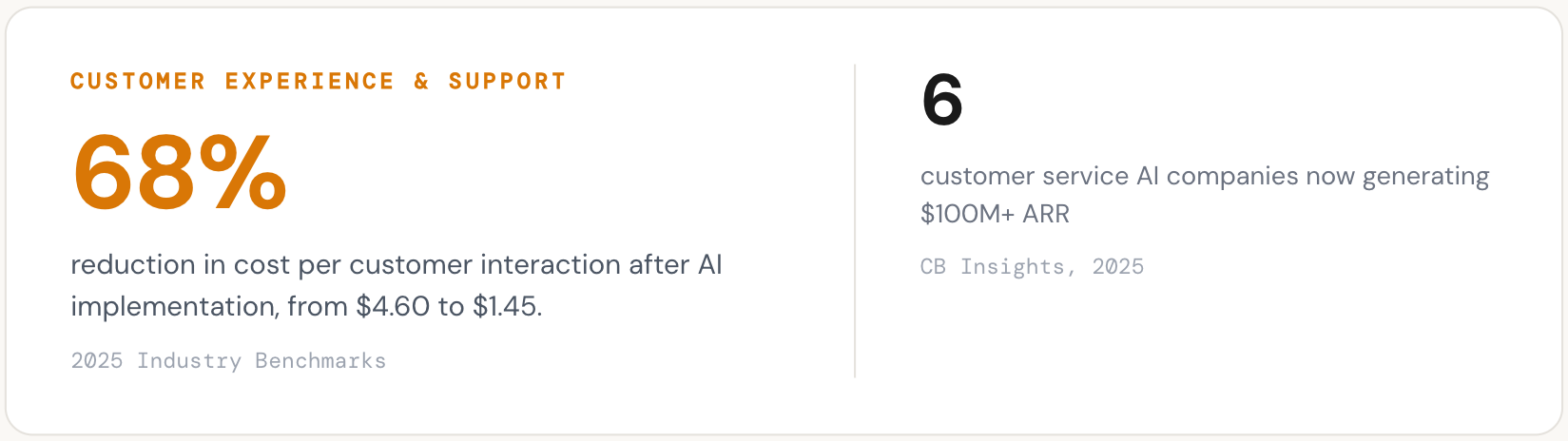

Customer experience and support

Customer experience is the second-largest agentic use case by revenue and arguably the one closest to full autonomous deployment. The economics are straightforward: AI-powered interactions now cost between $0.50 and $1.50, compared to $5 to $12 for a human-handled interaction. Industry benchmarks show a 68% reduction in cost per customer interaction after AI implementation, from $4.60 to $1.45 on average. For companies handling millions of support tickets annually, the ROI is immediate and measurable. Gartner projects that agentic AI will resolve 80% of customer service issues by 2029 with minimal human support.
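The benchmark figures can be checked, and scaled to a support operation, with a few lines of arithmetic. The 1,000,000-ticket annual volume below is a hypothetical assumption, not a figure from the cited benchmarks.

```python
def cost_savings(cost_before: float, cost_after: float, interactions: int):
    """Return (fractional reduction per interaction, total dollars saved)."""
    saved_each = cost_before - cost_after
    return saved_each / cost_before, saved_each * interactions

# $4.60 -> $1.45 are the benchmark averages above; 1,000,000 tickets/year is hypothetical.
pct, annual = cost_savings(4.60, 1.45, 1_000_000)
print(f"{pct:.1%} reduction, ${annual:,.0f} saved per year")
# 68.5% reduction, $3,150,000 saved per year
```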

The use case has evolved well beyond scripted chatbots. Today's customer service agents understand intent across channels, search internal knowledge bases, take actions in CRM and ticketing systems (issuing refunds, updating accounts, escalating cases), and handle multi-turn conversations that previously required trained human agents. The Databricks report confirms this breadth: customer support inquiry classification, customer advocacy, customer onboarding, and customer interaction summarization all rank among the top enterprise AI use cases by volume.

The market is already generating substantial revenue. CB Insights reports that six customer service AI companies now generate $100M or more in ARR. Vendors like Sierra AI, Intercom, and Decagon now charge per successful resolution rather than per seat, and Sierra AI reached $100M ARR within 21 months using this outcomes-based pricing model. Intercom Fin, Ada, PolyAI, and Zendesk's AI agents are all competing for the same enterprise buyers. The shift from "AI-assisted" to "AI-resolved" interactions is the key transition underway.

Market intelligence and strategic research

Databricks' analysis of observed enterprise agent deployments places market intelligence as the single largest use case by volume. This may surprise those who associate agentic AI primarily with coding, but it reflects a deep structural demand: every large organization needs to monitor competitive landscapes, track regulatory changes, synthesize earnings reports, analyze deal flow, and surface relevant insights proactively.

This is also the use case where "deep research" agents have found product-market fit. Products like OpenAI's Deep Research, Anthropic's extended research capabilities, Google's Gemini Deep Research, and Perplexity's autonomous search are not simple retrieval tools. They plan a multi-step research strategy, execute dozens of searches, synthesize across sources, evaluate conflicting information, and produce structured output. By any reasonable definition, they operate at Level 5 on the agentic capability spectrum: they dynamically plan, execute, and adapt with tool use and minimal human direction.
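The plan, search, synthesize pattern can be sketched in a few lines of Python. This is a deliberately simplified illustration, not how any of the named products work: `plan` and `fake_search` are hypothetical stand-ins for LLM and search calls, and majority voting across sources is a crude placeholder for evaluating conflicting information.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

def plan(question: str) -> List[str]:
    """Hypothetical planner: a real agent would ask an LLM to decompose the question."""
    return [f"{question} revenue", f"{question} customers", f"{question} funding"]

def research(question: str, search: Callable[[str], List[str]],
             max_workers: int = 4) -> Dict[str, str]:
    subqueries = plan(question)
    # Execute the searches concurrently; each returns one claim per source.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        claims_per_query = list(pool.map(search, subqueries))
    # Synthesize: where sources disagree, keep the claim most of them share.
    return {
        q: Counter(claims).most_common(1)[0][0]
        for q, claims in zip(subqueries, claims_per_query)
    }

def fake_search(query: str) -> List[str]:
    # Three hypothetical sources; one disagrees on revenue.
    if query.endswith("revenue"):
        return ["$100M ARR", "$100M ARR", "$80M ARR"]
    return ["unknown"]

print(research("Acme", fake_search))
# {'Acme revenue': '$100M ARR', 'Acme customers': 'unknown', 'Acme funding': 'unknown'}
```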

McKinsey's 2025 survey confirms the pattern from the enterprise side: knowledge management is one of the top two functions where organizations report scaling AI agents, alongside IT. Use cases like deep research in knowledge management, competitive analysis, and market monitoring have developed quickly. Glean, Hebbia, and Rogo (focused on financial research) are building agents that navigate and synthesize information across internal knowledge bases, documents, emails, and databases. On the external side, AI-powered research agents are beginning to blur the line between search engines and autonomous analysts.

The demand here is significant because the work being displaced is expensive. A junior analyst at a consulting firm or investment bank bills $150 to $300 per hour, and much of their time goes to research synthesis tasks that agents can now perform in minutes. As reasoning capabilities improve, this use case will expand from summarization into genuine analysis and recommendation.

Sales and revenue operations

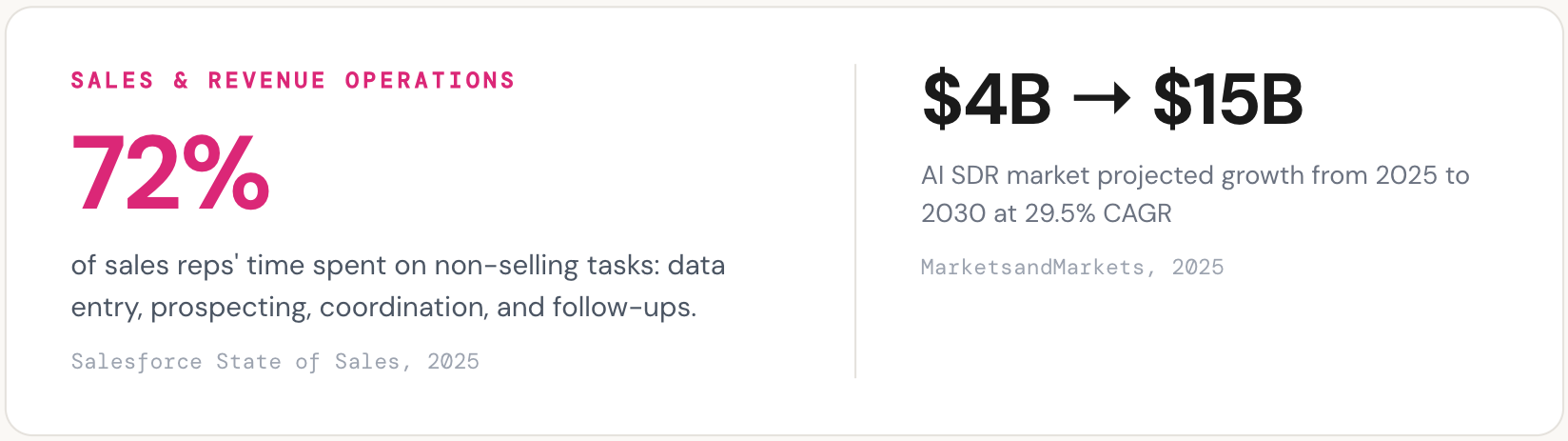

Sales is one of the clearest examples of autonomous AI agents delivering measurable value today. The workflow is naturally multi-step: identify prospects, research accounts, personalize outreach, qualify leads, schedule meetings, manage pipeline, and follow up. Each step involves different tools, different data sources, and high volume. A single enterprise sales team may need to process thousands of prospects per month.

The structural demand is significant. Sales reps today spend roughly 72% of their time on non-selling tasks: data entry, prospecting research, coordination, and manual follow-ups. This creates a large opening for agents that can handle the repetitive top-of-funnel work at machine speed while humans focus on relationship-building and deal closure. The AI SDR (sales development representative) market reflects this demand, projected to grow from $4.1 billion in 2025 to $15 billion by 2030 at a 29.5% CAGR according to MarketsandMarkets.
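As a quick consistency check on the MarketsandMarkets projection, growing from $4.1 billion in 2025 to $15 billion in 2030 spans five compounding years:

```latex
\text{CAGR} = \left(\frac{V_{2030}}{V_{2025}}\right)^{1/5} - 1
            = \left(\frac{15}{4.1}\right)^{1/5} - 1
            \approx 3.659^{0.2} - 1
            \approx 0.296
```

That is roughly 29.6%, in line with the reported 29.5% once rounding of the endpoint figures is taken into account.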

McKinsey's data shows that insurance leads all industries in the use of AI agents for marketing and sales, while revenue increases from AI use are most commonly reported in marketing and sales, strategy and corporate finance, and product development. Salesforce's Agentforce platform and Microsoft's Copilot for Sales are building agent capabilities natively into CRM systems. Startups like 11x and Artisan are building fully autonomous SDR agents that prospect, qualify, personalize outreach, and book meetings without human involvement. Survey data also points to a revenue effect: 83% of sales teams using AI report revenue growth, compared with 66% of teams without AI.

The adjacent recruiting workflow follows a similar pattern. Sourcing candidates, screening resumes, assessing fit, scheduling interviews, and managing communication are all multi-step, tool-intensive tasks with high volume and measurable outcomes. Mercor, which builds recruiting agents, has reached $100M+ ARR with profitability, demonstrating that the same agentic approach that works in sales prospecting translates directly to talent acquisition.

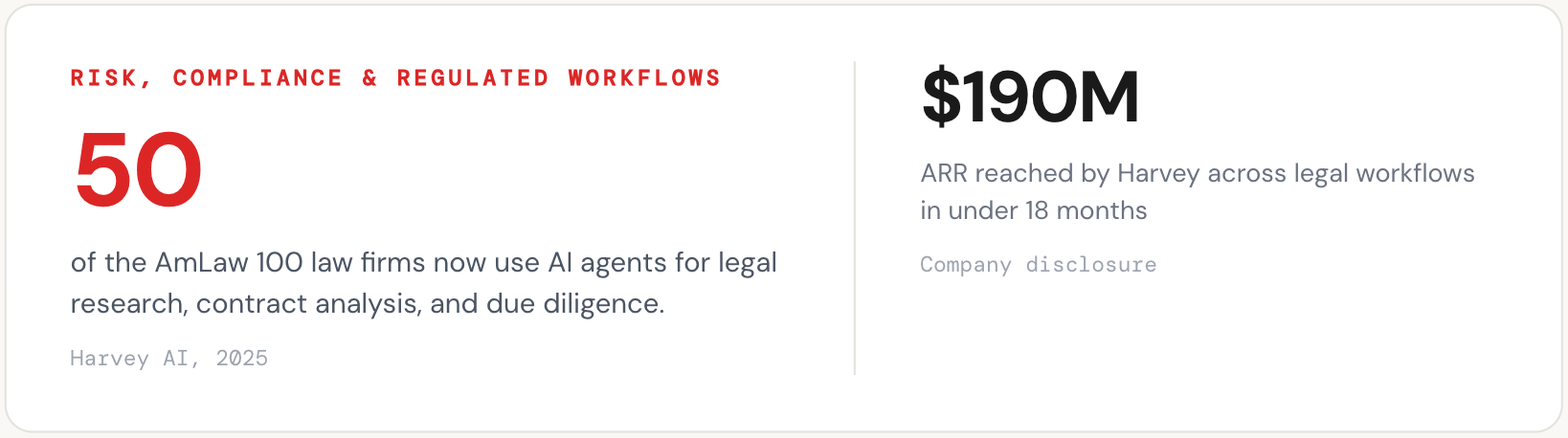

Risk, compliance, and regulated workflows

Legal, financial, and regulatory workflows are inherently multi-step and document-heavy: research a question across thousands of pages of case law, review a contract for risk clauses, cross-reference regulatory requirements, process insurance claims, screen transactions for anti-money laundering violations, audit financial statements for compliance. These are exactly the workflows where agentic systems provide value, and where the labor economics make automation compelling.

The Databricks report confirms this. Anti-money laundering, claim processing, regulatory reporting requirements, and loan origination all rank among the top enterprise AI use cases by observed volume. These are not experimental pilots. They are production deployments in some of the most regulated industries in the economy.

The adoption signal in legal is particularly notable. Harvey AI now serves 50 of the AmLaw 100 and over 100,000 lawyers, reaching $190M ARR across legal research, contract analysis, and due diligence workflows. The breadth of adoption across the most conservative, risk-averse institutions in the economy is itself strong evidence that the use case is real. Legal professionals are not early adopters by nature. When they move at this pace, it signals that the value proposition has cleared a high bar.

Healthcare compliance and clinical documentation follow a parallel pattern. The Databricks data shows medical literature synthesis and clinical note summarization as high-volume use cases. Companies like Ambience Healthcare ($243M Series C), EliseAI ($250M Series E), and Hippocratic AI ($126M) are building agents that handle clinical documentation, patient coordination, and administrative workflows in environments with stringent regulatory requirements (HIPAA, FDA).

What connects these seemingly different verticals is a shared set of characteristics: high document volume, structured processes, significant labor costs, regulatory requirements that demand consistency, and workflows where errors carry real consequences. These conditions create both strong demand for agents and a high bar for the reasoning capabilities those agents require.

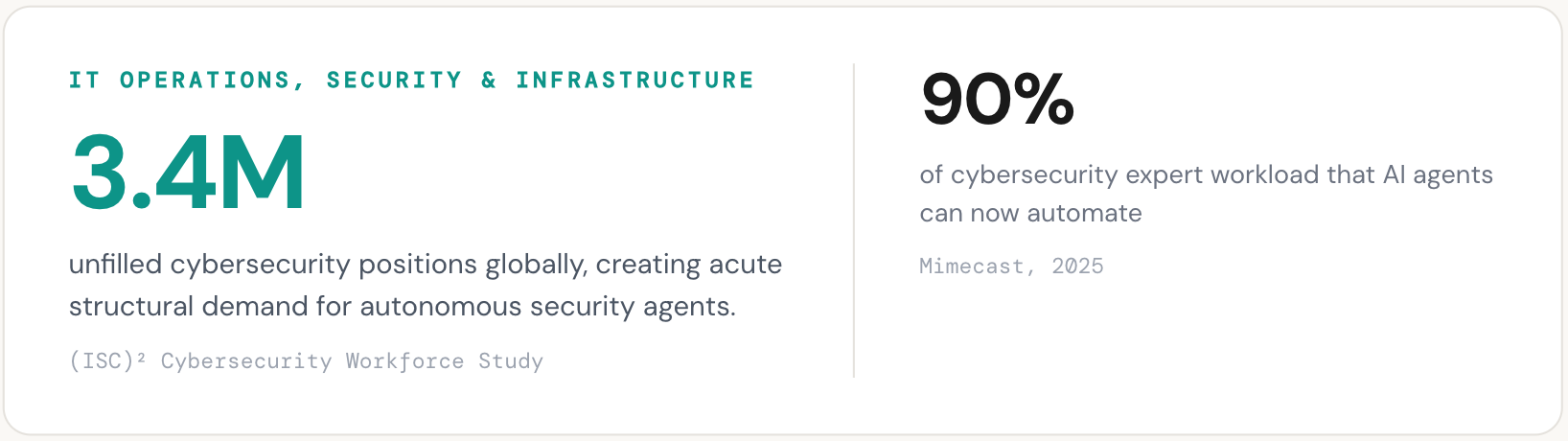

IT operations, security, and infrastructure

McKinsey's survey data shows IT as the single most common business function where organizations report scaling AI agents. This includes autonomous ticket resolution, infrastructure monitoring, incident response, and employee IT support. The use case is straightforward: IT service desks handle enormous volumes of repetitive requests (password resets, access provisioning, system troubleshooting), each requiring multiple steps across multiple systems.

ServiceNow's acquisition of Moveworks in early 2025, specifically for its agentic IT service desk capabilities, signals how large this opportunity is. The agentic approach goes beyond traditional ITSM automation: agents interpret requests in natural language, determine intent, coordinate across enterprise systems, and resolve issues end-to-end without human intervention.

Cybersecurity is a closely related and rapidly growing adjacent use case. Security operations centers face an overwhelming volume of alerts every day, and sorting critical signals from noise is slow, repetitive, and dependent on institutional knowledge. The global cybersecurity workforce faces a shortage of 3.4 million professionals, creating acute demand for autonomous systems that can scale defensive capacity. Half of organizations now use AI to redesign cybersecurity workflows, and 77% expect agents to become essential to security operations within the next few years.

Agents in this category autonomously scan for threats, investigate anomalies, isolate compromised systems, deploy patches, and reconfigure firewalls in real time. CrowdStrike's Charlotte AI, 7AI (which raised $130M in what was reported as one of the largest cybersecurity Series As), and Trend Micro are all building autonomous security agents. The combination of high alert volumes, severe talent shortages, and the speed requirements of modern threat response makes cybersecurity one of the strongest structural demand cases for agentic AI.

The pattern across use cases

These six categories span different industries, different buyers, and different technical requirements. But the underlying demand pattern is consistent. Enterprises are deploying agents where the work is high-volume, multi-step, measurable, and expensive to perform manually. The strongest adoption is happening where agents can be evaluated objectively (did the code pass tests, was the ticket resolved, was the claim processed correctly) rather than where output is subjective or open-ended.

This framework helps explain the current landscape. Software development, customer service, and IT operations lead because they score highest across all four dimensions. Market intelligence and sales are accelerating because reasoning model improvements are making agents capable enough to handle the complexity. Legal and compliance workflows carry the highest stakes per task, which creates both strong demand and the highest reliability requirements.

It also explains what comes next. As reasoning capabilities at the model layer improve, use cases that are currently too complex for reliable autonomous operation will become viable. The ceiling on what agents can do is set not by the scaffolding or the tooling, but by the depth and reliability of the reasoning underneath.

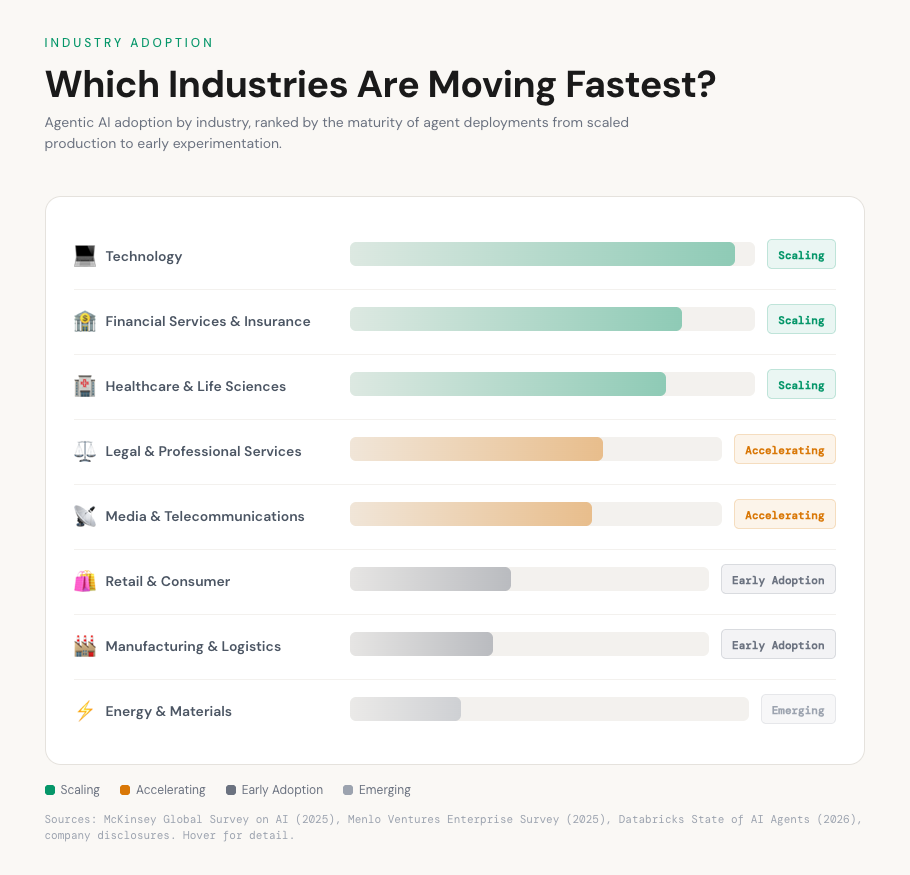

4. Which Industries Are Moving Fastest?

The use cases described above cut across industries, but adoption is not uniform. Some sectors are already scaling agents into production workflows. Others are still running pilots. The variation is not random. It correlates with three factors: the volume of document-heavy or data-intensive workflows in the industry, the severity of labor shortages or cost pressures, and the regulatory environment (which, counterintuitively, appears to accelerate rather than slow adoption once organizations commit).

McKinsey's 2025 Global Survey on AI provides the clearest industry-level data. Agent use is most advanced in the technology sector, where software engineering and IT report the highest levels of scaled deployment. But the surprise in the data is how quickly other industries are catching up.

Figure 3: Agentic AI adoption maturity by industry, ranked from scaled production deployments to early experimentation. Sources: McKinsey Global Survey on AI (2025), Menlo Ventures Enterprise Survey (2025), Databricks State of AI Agents (2026).

Technology is the furthest along, as expected. Twenty-four percent of technology companies have scaled AI agents in software engineering, the highest of any industry-function combination in the McKinsey survey. IT operations and product development follow closely. The feedback loops in software (tests pass or fail, code compiles or doesn't) make this industry a natural fit for autonomous systems.

Financial services and insurance are close behind, and in some functions ahead of tech. Insurance leads all industries in the use of AI agents for marketing and sales, according to McKinsey. The Databricks data confirms the pattern from the deployment side: anti-money laundering, claim processing, loan origination, and regulatory reporting are all high-volume enterprise use cases. The economics are compelling. A single large bank may process millions of transactions daily for compliance screening, and an insurance carrier may handle hundreds of thousands of claims per year. These are exactly the conditions where agents create measurable value. The financial services sector's comfort with quantitative evaluation and its existing investment in data infrastructure have made it one of the faster-moving regulated industries.

Healthcare and life sciences present one of the most interesting adoption patterns. Menlo Ventures' 2025 survey found that healthcare is adopting AI 2.2x faster than the broader economy, driven by a convergence of acute labor shortages, extreme documentation burdens, and rising patient volumes. Clinical note summarization, medical literature synthesis, patient coordination, and administrative workflows all rank among the top enterprise use cases in the Databricks data. The funding reflects this demand: Ambience Healthcare raised a $243M Series C, EliseAI raised a $250M Series E, and Hippocratic AI raised $126M, all building agents for clinical and administrative healthcare workflows. The regulatory complexity in healthcare (HIPAA, FDA) makes the reliability bar high, but it also means that once an agent clears that bar, the moat is significant.

Legal and professional services are accelerating rapidly despite the industry's reputation for conservatism. Harvey AI serving 50 of the AmLaw 100 is the most visible signal, but the pattern extends beyond a single company. Law firms, consulting firms, and audit practices are all deploying agents for research, document review, and compliance workflows. The billable-hour economics of professional services make the ROI case particularly clear: when associates bill $400 to $800 per hour and spend a significant portion of their time on tasks agents can perform in minutes, the value proposition is immediate.

Where adoption is lagging. Retail, manufacturing, and energy are earlier in their adoption curves. McKinsey's data shows that 73% of advanced manufacturing respondents are not yet using AI agents in product development at all. Retail has high-volume customer service use cases that are moving forward, but deeper supply chain and inventory optimization agents remain largely in pilot. Energy and materials are growing from a low base, with most agent deployments focused on asset monitoring and regulatory compliance rather than core operations.

The pattern is worth noting for what it reveals about the trajectory. The industries moving fastest share a common profile: high document volumes, expensive labor, regulatory requirements that demand consistency, and workflows where output quality is measurable. As model reasoning capabilities improve, industries that are currently in early adoption (where the complexity of tasks exceeds current agent reliability) will follow the same curve. The question is not whether these industries will adopt agents, but how quickly the models underneath can meet their reliability requirements.

5. The Compute Footprint of Agentic AI: More Tokens, Better Models

The shift to agentic AI isn't just visible in use case adoption and industry demand. It's measurable in the raw compute being consumed.

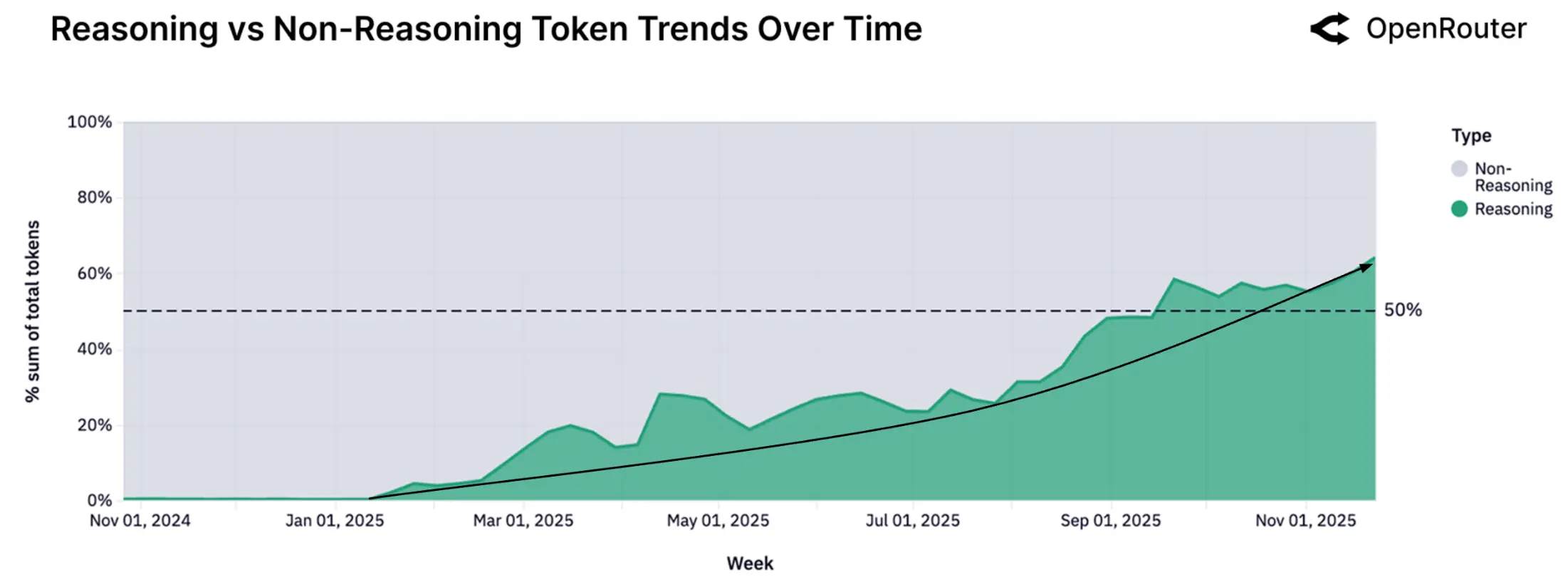

The most comprehensive empirical dataset on this shift comes from the a16z and OpenRouter "State of AI" study, published in December 2025. The study analyzed metadata from over 100 trillion tokens routed across more than 5 million developers, 300+ models, and 60+ providers, making it the largest publicly available window into how AI is actually being used.

The headline finding: the share of tokens flowing through reasoning-optimized models climbed from effectively zero in early 2025 to over 50% by late 2025. This is a structural shift in how developers and enterprises are using AI. Reasoning models (like OpenAI's o-series and DeepSeek R1) consume significantly more tokens per task because they "think" through problems step by step before producing output. Their rapid adoption signals that users are moving from simple question-and-answer interactions toward complex, multi-step workflows that demand deeper inference.

Figure 4: Reasoning versus non-reasoning token share over time. Source: a16z/OpenRouter State of AI, December 2025.

At the same time, the mix of what those tokens are being used for has changed dramatically. Programming tasks surged from 11% to approximately 50% of total token volume over the same period, making coding the dominant use case by a wide margin. Average prompt lengths quadrupled, another indicator that users are submitting richer, more complex instructions rather than short queries.

Figure 5: Token consumption by category over time, showing programming's surge from 11% to approximately 50%. Source: a16z/OpenRouter State of AI, December 2025.

Taken together, these trends paint a clear picture. AI usage is shifting from lightweight, conversational interactions toward heavy, agentic workloads that consume far more compute per task. The implications for infrastructure, pricing, and model development are significant: the demand curve for inference compute is steepening faster than most forecasts anticipated.

The token multiplier effect

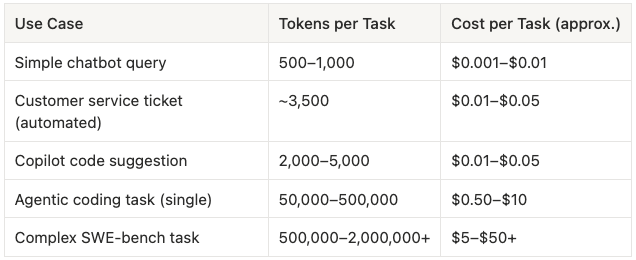

This matters because agentic workloads consume tokens at a fundamentally different rate than chatbot interactions.

Table 2: The token multiplier effect, comparing token consumption across AI use cases from simple chatbot queries to complex agentic coding tasks.

A simple chatbot query uses 500 to 1,000 tokens. A copilot code suggestion uses 2,000 to 5,000. But a single agentic coding task can consume 50,000 to 500,000 tokens, and a complex benchmark-level task can burn through 500,000 to over 2 million. That is a 100x to 1,000x multiplier over a standard conversational interaction.

The real-world usage data confirms this. The average Cursor user consumes approximately 1.3 million tokens per month, and power users reach 7 million tokens monthly. Heavy Claude Code users on premium plans consume more than $35,000 worth of inference per month. Jensen Huang framed the multiplier effect in concrete terms: a human user might make roughly 50 queries per day, while an autonomous agent performing the same function could make 50,000 API calls daily, a 1,000x increase.
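The multiplier arithmetic is easy to reproduce using only the token ranges quoted above. Note that the 100x to 1,000x figure is measured against the low end (500 tokens) of a chatbot query; using the 1,000-token high end as the baseline halves the ratios.

```python
CHATBOT_QUERY = (500, 1_000)              # tokens per simple chatbot query
AGENTIC_CODING_TASK = (50_000, 500_000)   # tokens per agentic coding task
BENCHMARK_TASK = (500_000, 2_000_000)     # tokens per complex benchmark-level task

def multiplier_range(task, baseline_tokens):
    """Ratio of a task's low and high token counts to a fixed baseline."""
    low, high = task
    return low / baseline_tokens, high / baseline_tokens

print(multiplier_range(AGENTIC_CODING_TASK, CHATBOT_QUERY[0]))  # (100.0, 1000.0)
print(multiplier_range(AGENTIC_CODING_TASK, CHATBOT_QUERY[1]))  # (50.0, 500.0)
print(multiplier_range(BENCHMARK_TASK, CHATBOT_QUERY[0]))       # (1000.0, 4000.0)

# Jensen Huang's framing: agent API calls per day vs. human queries per day
print(50_000 / 50)  # 1000.0
```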

What this means for the ecosystem

This token consumption is already reshaping provider economics. The AI labs selling inference (Anthropic, OpenAI, Google) are seeing API revenue accelerate sharply, driven disproportionately by agentic coding and developer workflows. Anthropic, whose revenue mix skews roughly 70% API, has grown particularly fast as a primary provider behind many coding agents. OpenAI's API business is also accelerating, with Sam Altman disclosing in January 2026 that the API alone added over $1B in annualized recurring revenue in a single month.

But the broader point is not about any single provider. It is that the inference demand created by agentic workloads is concentrated in the same use cases outlined earlier: software development, customer service, market intelligence, and compliance workflows. As agents take on longer, more complex tasks, the compute required per task will continue to grow. This creates both an infrastructure challenge and an economic opportunity for the companies providing the reasoning underneath.

The connection between token consumption and model capability is worth stating directly. Agents consume more tokens because they reason through problems in multiple steps. The more capable the reasoning, the more steps the agent can reliably execute, and the more tokens it consumes. This is not a cost problem to be minimized. It is a demand signal. Rising token consumption is evidence that users are trusting agents with increasingly complex work, and that the economic value of that work justifies the compute cost. The companies that can deliver deeper, more reliable reasoning per token will capture a disproportionate share of this growing demand.
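One mechanical reason multi-step agents are token-hungry: in a typical stateless API loop, each call re-sends the growing transcript. Under the simplifying assumptions below (a fixed initial prompt, a constant number of tokens appended per step, and no truncation, summarization, or caching), total input tokens grow roughly quadratically with the number of steps. The token counts are hypothetical.

```python
def total_input_tokens(steps: int, prompt_tokens: int, tokens_per_step: int) -> int:
    """Input tokens summed over all calls when each call re-sends the full transcript.

    Call i (0-indexed) sees the initial prompt plus i earlier steps of reasoning
    and tool output, so the total equals
    steps * prompt_tokens + tokens_per_step * steps * (steps - 1) / 2.
    """
    return sum(prompt_tokens + i * tokens_per_step for i in range(steps))

for n in (5, 10, 20):
    print(n, total_input_tokens(n, prompt_tokens=2_000, tokens_per_step=1_000))
# 5 20000
# 10 65000
# 20 230000
```

In this toy model, doubling the step count from 10 to 20 raises total input tokens about 3.5x, which is why longer autonomous sessions translate into disproportionately more tokens processed.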

The model churn signal

There is another pattern in the token data that is easy to overlook but important for understanding where this market is headed: users are not loyal to models. They are loyal to capability.

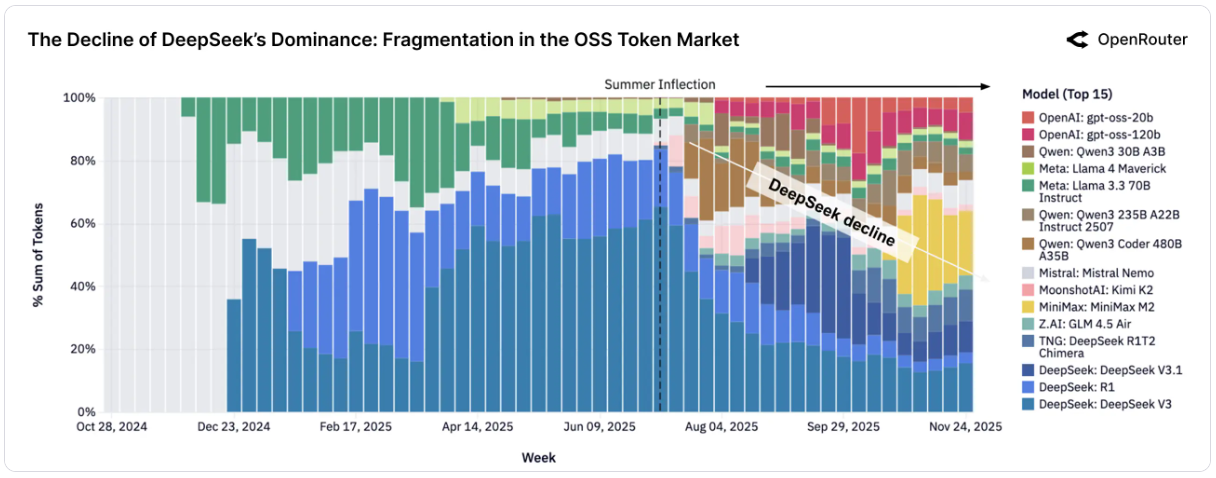

The OpenRouter data shows this clearly. In early 2025, DeepSeek held nearly 80% of all open-source token share on the platform, driven by the dominance of its V3 and R1 models. By late 2025, that share had dropped below 25%, not because DeepSeek got worse, but because capable alternatives arrived. Qwen, MiniMax, Moonshot's Kimi, and others each captured meaningful share within weeks of launch. As of early 2026, MiniMax M2.5 sits at #1 on the OpenRouter leaderboard with 1.59 trillion tokens, a model that had zero usage just months earlier. The pattern repeats with each wave: a new state-of-the-art model launches, usage spikes sharply, share redistributes, and the previous leader's dominance erodes.

Figure 6: Open-source model market share over time, showing the shift from DeepSeek's near-monopoly in early 2025 to a fragmented, competitive landscape by late 2025. Source: a16z/OpenRouter State of AI, December 2025.

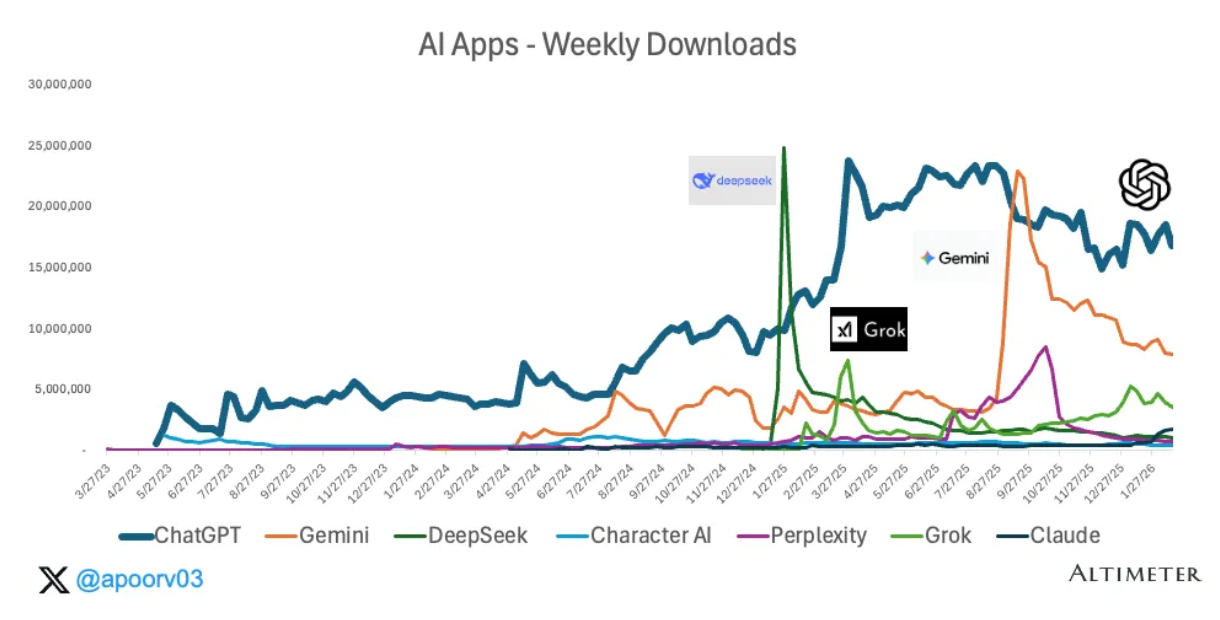

The consumer side tells a similar story. Apoorv Agrawal's analysis of AI app download data shows what he describes as "four seasons" of AI challengers in 2025. DeepSeek surged past 25 million weekly downloads in January, only for usage to fall back within weeks. Grok spiked in the spring. Gemini had its moment in the summer, driven by a viral image generation release. Each wave grabbed headlines and downloads, then receded. The download chart is a story of sharp spikes and rapid decay, where every new capability release triggers a wave of users willing to switch.

Figure 7: AI app weekly downloads, showing the spike-and-decay pattern as each new model launch triggers a wave of users willing to switch. Source: Apoorv Agrawal / Sensor Tower, March 2026.

The a16z/OpenRouter study frames this as structural fragmentation. Where one model family once dominated, half a dozen now sustain meaningful share. No single open-source model holds more than roughly 20 to 25% of tokens consistently. Capable new models can capture significant usage within weeks of launch. The report notes that retention patterns are increasingly influenced by "breakthrough moments," adding that when a new capability meaningfully changes what users can do, they switch and do not switch back.

What this reveals is that switching costs in the model layer are low, and users (both developers and enterprises) are actively searching for better reasoning. The market is not locked in. When a model delivers a measurable improvement in capability, whether in reasoning depth, coding accuracy, or task completion rate, users migrate to it quickly. This is not benchmark tourism. The OpenRouter data shows that when usage spikes align with genuine capability gains, adoption sticks. When they are driven by hype alone, it fades.

For the agentic ecosystem, this has a direct implication. The companies and products that deliver better model-layer reasoning will not struggle to find adoption. The demand signal is already there: users are actively cycling through models looking for the one that can handle their workflows more reliably. The bottleneck is not distribution or awareness. It is capability.

6. Where the Money Is Flowing

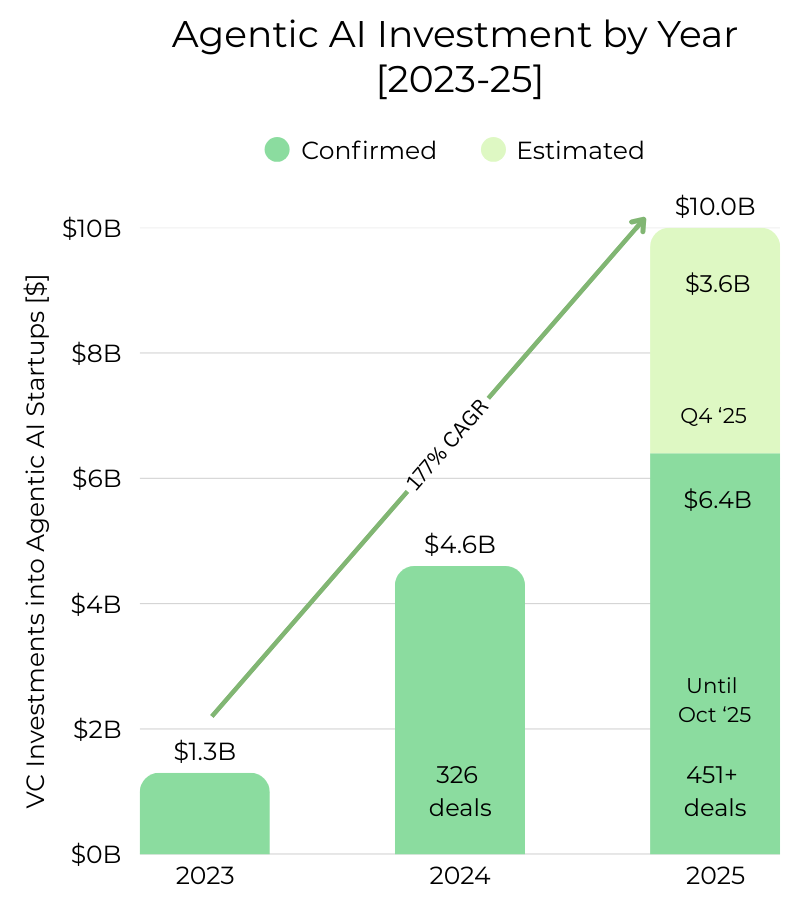

The investment community has committed significant capital to agentic AI, and the pace of acceleration has exceeded most expectations.

PitchBook tracked $6.4 billion across 451+ deals through mid-October 2025. But that number understates the full picture. Q4 2025 was an exceptionally active quarter for AI venture capital broadly (CB Insights recorded $83.2 billion in total AI funding that quarter alone), and agentic AI rode the same wave. Several of the largest deals of the year closed after the October cutoff. Accounting for Q4 activity, we estimate full-year 2025 investment in agentic AI startups reached approximately $10 billion.

The growth trajectory from 2023 to 2025 tells the story: roughly $1.3 billion in 2023, $4.6 billion in 2024, and an estimated $10 billion in 2025, representing a 177% compound annual growth rate.

To put this in context, total AI venture capital in 2025 reached $202 to $259 billion depending on the source. Agentic AI represents roughly 4 to 5% of total AI dollars but approximately 10% of all AI deals by count, according to Prosus. The deals are smaller on average than foundation model mega-rounds, but far more numerous, suggesting a broader and healthier competitive landscape than the winner-take-most dynamics seen in foundation model funding.
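As a quick check on the arithmetic in the two paragraphs above, here is a minimal Python sketch. It recomputes the compound annual growth rate from the yearly totals and the agentic share of total 2025 AI venture dollars. It uses only the figures quoted in this section, in billions of US dollars. The 2025 value is the estimate described above, not a confirmed total. The helper names `cagr` and `yoy` are our own.

```python
# Figures quoted in this section, in billions of US dollars.
# The 2025 value is an estimate, not a confirmed total.
agentic_vc = {2023: 1.3, 2024: 4.6, 2025: 10.0}
total_ai_vc_2025 = (202.0, 259.0)  # low and high estimates, depending on source


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate: the constant yearly rate that turns start into end."""
    return (end / start) ** (1 / years) - 1


def yoy(series: dict[int, float]) -> dict[int, float]:
    """Year-over-year growth for each year after the first."""
    years = sorted(series)
    return {y: series[y] / series[prev] - 1 for prev, y in zip(years, years[1:])}


growth = cagr(agentic_vc[2023], agentic_vc[2025], years=2025 - 2023)
print(f"CAGR 2023-2025: {growth:.0%}")
for year, rate in yoy(agentic_vc).items():
    print(f"YoY growth into {year}: {rate:.0%}")

low, high = total_ai_vc_2025
share_low = agentic_vc[2025] / high   # larger denominator gives the smaller share
share_high = agentic_vc[2025] / low
print(f"Agentic share of 2025 AI VC: {share_low:.1%} to {share_high:.1%}")
```

The compound rate of about 177% is the geometric mean of the two yearly jumps, roughly 254% into 2024 and 117% into 2025. That is why it sits between them. The share works out to about 3.9% to 5.0%, which is consistent with the "roughly 4 to 5%" figure.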

Figure 8: Agentic AI venture capital investment by year, 2023 to 2025. Confirmed funding through October 2025 per PitchBook; Q4 2025 estimated based on announced deals.

Where capital is concentrating

The distribution of investment across agent categories reveals where investors see the strongest near-term demand and the largest long-term markets.

Coding and developer tool agents attracted the largest share of capital by a wide margin, with over $5 billion in combined funding in 2025 alone. This includes Cursor's $900M Series C and $2.3B Series D (reaching a $29.3B valuation), Cognition (Devin) raising $1.2B+ across three rounds, Poolside AI raising approximately $2B with NVIDIA contributing up to $1B, and Lovable raising $530M across two rounds. The concentration of capital in coding reflects both the maturity of the use case and investor conviction that AI-native development tools will become the default way software is built. It also reflects the verifiability of output in coding workflows, which makes it easier for investors to evaluate agent performance and product-market fit.

Customer experience and support agents drew significant investment as the category closest to full autonomous deployment. Sierra AI raised $350M at a $10B+ valuation, and Decagon raised $381M across two rounds within seven months, reaching a $4.5B valuation. CB Insights reports that six companies in this category now generate over $100M in ARR. The shift toward outcomes-based pricing (charging per resolved issue rather than per seat) is creating a new economic model for customer service that aligns vendor incentives directly with enterprise ROI.

Legal, compliance, and regulated workflow agents were led by Harvey AI, which raised three rounds in a single year (Series D, E, and F) totaling $760M and reached an $8B valuation by December. The speed and scale of Harvey's fundraising reflect the depth of demand in legal workflows. There, high document volumes, expensive labor, and regulatory requirements combine to create a strong pull for autonomous systems. Adjacent compliance and financial services workflows (anti-money laundering, claims processing, regulatory reporting) are drawing additional investment, though many of these deployments are being built by incumbents rather than venture-backed startups.

Sales and revenue operations agents represent a fast-growing investment category. The AI SDR market is projected to grow from $4.1 billion in 2025 to $15 billion by 2030, according to MarketsandMarkets. Startups like 11x and Artisan are building fully autonomous sales development agents, while Mercor raised $450M for recruiting automation, reaching a $10B valuation. Salesforce and Microsoft are also investing heavily in embedding agentic capabilities into their CRM platforms, signaling that this category will see competition from both startups and incumbents.

Cybersecurity agents emerged as a distinct funding category in 2025, anchored by 7AI's $130M Series A, one of the largest early-stage cybersecurity rounds on record. The structural demand is clear. There are 3.4 million unfilled cybersecurity positions globally. Alert volumes are rising, and modern threat response has to move fast. Together, these create an acute need for autonomous systems. CrowdStrike, Trend Micro, and other established security vendors are also building agentic capabilities into their platforms.

Market intelligence and research agents are receiving investment through both venture-backed startups (Glean, Hebbia, Rogo) and through the deep research capabilities being built by the major AI labs themselves. OpenAI's Deep Research, Anthropic's extended research mode, and Google's Gemini Deep Research represent significant R&D investment in autonomous research agents, even though this investment doesn't show up in traditional VC statistics. The Databricks data showing market intelligence as the #1 enterprise agent use case by volume suggests this category may be underfunded relative to its actual demand.

Healthcare and life sciences agents attracted notable funding, with Ambience Healthcare ($243M Series C), EliseAI ($250M Series E), and Hippocratic AI ($126M) building agents for clinical documentation, patient coordination, and administrative workflows. Menlo Ventures found that healthcare is adopting AI 2.2x faster than the broader economy, but the regulatory complexity (HIPAA, FDA) means the capital requirements for building compliant agents in this space are higher than in less regulated categories.

What the investment pattern reveals

The distribution of capital mirrors the use case demand pattern from Section 3. Funding is flowing most heavily into the categories where agents have demonstrated the clearest product-market fit: coding, customer service, and legal workflows. These are the use cases where output is verifiable, ROI is measurable, and enterprises are already paying at scale.

But notably, the fastest-growing investment categories (cybersecurity, sales automation, market intelligence) are also the ones where the gap between current agent capabilities and full production autonomy is widest. Investors are not just funding what agents can do today. They are betting on the trajectory of the reasoning improvements that will unlock the next wave of use cases. This is a bet on the model layer, and it connects directly to the question at the center of this analysis: what is still missing?

7. What's Still Missing: Better Test-Time Reasoning

The data makes a compelling case that agentic AI is real and scaling fast. But there is still a gap between where agents are today and where they need to be for full production autonomy.

That gap is often described as "reliability." In practice, it is a reasoning problem, specifically a problem with how models think during inference. Test-time reasoning refers to the model's ability to work through problems in the moment, during actual task execution, rather than relying solely on patterns learned during training. When an agent encounters an unexpected error, an ambiguous instruction, or a step that didn't go as planned, it needs to reason its way forward in real time. That capacity is what separates a useful demo from a dependable production system.

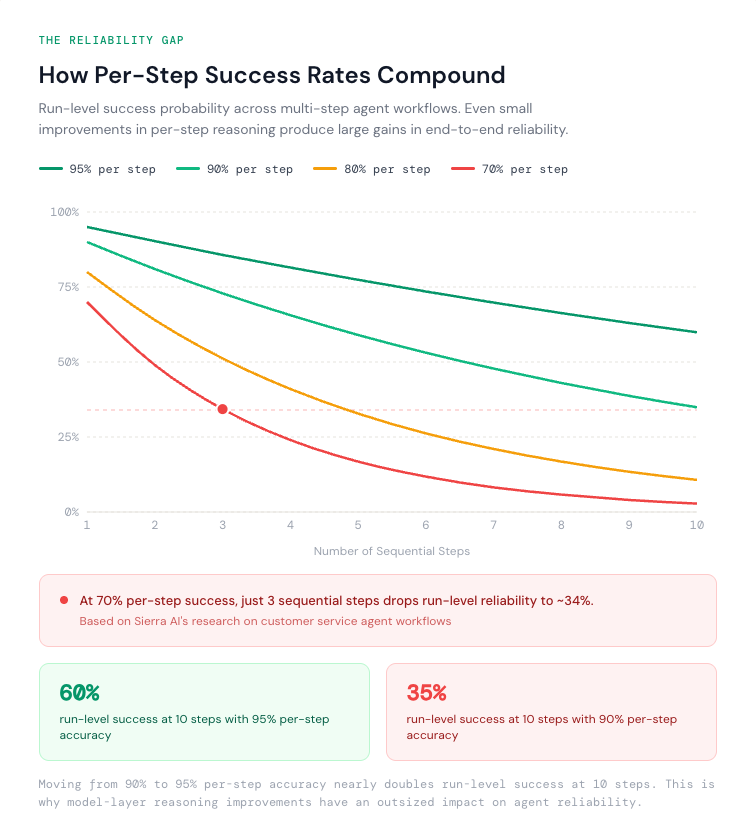

Current agents can look impressive on any single attempt, but production systems are judged on run-level success across multi-step workflows. When tasks require a sequence of correct decisions, small failure rates compound quickly.

Sierra AI's research on customer service agents illustrates the dynamic. An agent that succeeds on roughly 70% of individual interactions can feel production-ready in a demo. But the moment a resolution requires multiple interactions to go right in a row, the odds of a clean run drop fast. Even at just three consecutive interactions, 70% per interaction becomes roughly 34% run-level success. Demos are convincing. Sustained, unsupervised operation is a different standard entirely.

Figure 9: How per-step success rates compound across multi-step agent workflows. Calculated as $P(\text{run}) = P(\text{step})^n$, where $n$ is the number of sequential steps. Sierra AI's customer service research demonstrates the 70% → 34% decay at 3 steps.
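The compounding in Figure 9 is easy to reproduce. The minimal Python sketch below follows the caption's formula, which assumes that steps are independent and share one success rate. Real workflows rarely satisfy that exactly, so treat the numbers as illustrative. The sketch also inverts the formula to show the per-step success rate a workflow would need to reach a chosen run-level target. The 90% target and the step counts are hypothetical values picked for illustration.

```python
def run_success(p_step: float, n_steps: int) -> float:
    """P(run) = P(step)^n, assuming independent steps with the same success rate."""
    return p_step ** n_steps


def required_step_success(target_run: float, n_steps: int) -> float:
    """Invert P(run) = P(step)^n: the per-step rate needed to reach target_run."""
    return target_run ** (1 / n_steps)


# How a 70% per-step success rate decays as the workflow gets longer.
for n in (1, 3, 5, 10):
    print(f"{n:>2} steps at 70% per step -> {run_success(0.70, n):.1%} run-level success")

# Hypothetical target: 90% of 10-step runs should finish cleanly.
print(f"Per-step rate needed: {required_step_success(0.90, 10):.2%}")
```

At 70% per step, three steps give about 34%, which is the Sierra example. Ten steps fall below 3%. In the other direction, a 90% run-level target over ten steps requires roughly 99% reliability at every step.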

This run-level reasoning gap shows up across every deployment category. Coding agents that solve benchmark problems still stumble on multi-file, multi-step tasks in real codebases. Customer service agents handle routine queries well but misroute or hallucinate on edge cases. CRM agents, as Salesforce's own research shows, succeed on only about 55% of professional-grade tasks.

The specific reasoning capabilities that remain underdeveloped are well understood, and four stand out:

- Long-horizon planning: the agent must maintain a coherent strategy from start to finish across 10 or more sequential steps.
- Self-correction: the agent must recognize that an intermediate step produced a bad result and adjust its approach rather than compounding the error.
- Error recovery: the agent must handle an unexpected state (a failed API call, an ambiguous user input, a missing piece of data) by reasoning its way to a viable alternative path.
- Goal persistence: the agent must hold its original objective across an extended task chain without drifting, forgetting context, or losing track of what it was trying to accomplish.

These are not scaffolding or tooling problems. They are model-layer reasoning problems that manifest at inference time.
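To see why self-correction and error recovery matter at the run level, the sketch below extends the same compounding model with one loudly stated assumption. The agent reliably recognizes when a step has failed and gets a limited number of fresh attempts, each succeeding independently with the same probability. Under that assumption, a step fails only if every attempt fails. The retry counts and the 10-step workflow are hypothetical. In practice, recognizing the failure is itself the hard, model-layer part, which is the self-correction capability described above.

```python
def step_success_with_recovery(p_step: float, retries: int) -> float:
    """Per-step success if a failed attempt is detected and retried.

    Assumes (hypothetically) perfect failure detection and independent attempts:
    the step fails only when the first attempt and all `retries` retries fail.
    """
    return 1 - (1 - p_step) ** (retries + 1)


def run_success(p_step: float, n_steps: int) -> float:
    """P(run) = P(step)^n for independent steps with a shared success rate."""
    return p_step ** n_steps


P_STEP = 0.70   # per-attempt success rate, matching the example in this section
N_STEPS = 10    # hypothetical long-horizon workflow

for retries in (0, 1, 2):
    p_eff = step_success_with_recovery(P_STEP, retries)
    print(f"retries={retries}: per-step {p_eff:.1%}, "
          f"{N_STEPS}-step run {run_success(p_eff, N_STEPS):.1%}")
```

Under these assumptions, the same 70% model goes from under 3% to roughly 76% run-level success on a ten-step task with two recoveries per step. The gain depends entirely on the detection assumption. An agent that cannot tell a bad intermediate result from a good one gets none of it.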

The pattern is consistent: agents are capable enough to augment human workflows today, but not yet dependable enough to fully replace them. Closing this gap will depend less on better wrappers and scaffolding and more on improving the underlying models' ability to reason at test time. That matters most across longer horizons and messy, real-world sequences.

8. Conclusion: Agents Are Here, And the Best Is Yet to Come

The agentic AI landscape has moved from "interesting prototypes" to something you can measure in the real economy.

Across the market, the signals are consistent. We see them in how agenticness itself is being defined and debated, in where agents are being deployed, in which industries are moving first, in how developers are spending tokens, and in where venture dollars are concentrating. Different lenses, same story: teams are no longer asking whether agents are useful. They are asking how quickly they can put agents into workflows that matter.

This post walked through the evidence from multiple angles because no single metric tells the whole story. Use cases show what agents are being trusted to do. Industry patterns show where ROI is clearest today and where adoption is catching up next. Token spend shows what people actually run in production. Venture flow shows what builders and investors believe the next frontier will be. Together, they point to a market that is not just emerging, but actively pulling agents into real work.

And yet, the ceiling is showing up in the same place again and again. Agents are capable enough to augment workflows, but they are still too inconsistent to operate autonomously across long, messy, multi-step sequences. The gap between a great demo and a system that can run unsupervised at scale is still defined by the underlying model's reasoning at test time. That is the problem we are focused on at Voaige. We are building a test-time cognition layer that helps LLMs reason better at inference without requiring retraining data. Our goal is clear: push models beyond their default capability when it matters most, while they are actually doing the work.

The agentic era is here. The market is demanding agents across more tasks, more teams, and more production contexts. The next wave will be shaped by who can raise run-level performance, not just make agents easier to orchestrate. We believe test-time reasoning is the lever, and we are building for that moment.

Sources

McKinsey, "The State of AI in 2025," November 2025

Databricks, "State of AI Agents 2026," January 2026

a16z & OpenRouter, "State of AI: An Empirical 100 Trillion Token Study," December 2025

Menlo Ventures, "2025: The State of Generative AI in the Enterprise," December 2025

Gartner, "Top 10 Strategic Technology Trends for 2025"

PitchBook, "AI Agent VC Trends," October 2025

CB Insights, "State of AI Q4 2025" and "Customer Service AI Market Share 2025"

MarketsandMarkets, "AI SDR Market Global Research Report," 2025

Anthropic, "Building Effective Agents"

Sacra, "Anthropic Revenue, Valuation & Funding"

Sherwood News, "Anthropic On Track for $9 Billion Annual Revenue"

(ISC)² / BCR Cyber, Cybersecurity Workforce Study, 2025

Company announcements, earnings disclosures, and funding round reporting